Reimagining AI in UX Design

A Research Case Study on Modern Design Tools

Where It All Started

AI is no longer a tool sitting quietly in the corner.

It’s now the loudest voice in the room.

From writing code to generating visuals, tools like ChatGPT, Claude, and Google Gemini have become part of everyday workflows.

People aren’t just using AI anymore…

they’re depending on it.

But this raised a question in my mind:

If AI is helping everyone…

how well is it actually helping designers?

The Curiosity

As a designer, I didn’t just want to use AI tools. I wanted to understand them.

- Are they actually solving design problems?
- Or just creating “good-looking noise”?
- Are designers in control… or just accepting whatever AI gives?

This curiosity became the seed of the project.

The Goal

- To explore how AI is currently used in UI/UX design workflows
- To analyze the usability and effectiveness of existing AI design tools
- To identify key UX gaps in AI-powered design experiences
- To understand how designers interact with AI during real tasks
- To evaluate the balance between automation and user control
- To uncover opportunities to improve AI-driven design systems
- To redesign an AI tool experience that better supports designers

My Role

UX Designer & Researcher

Responsibilities

- Conducted initial research on general AI tools and design-specific AI tools
- Identified and shortlisted 5 major AI UI tools for analysis
- Designed a consistent task scenario for testing across all tools
- Performed hands-on testing of each tool across complete workflows
- Conducted usability testing with two users (experienced & beginner)
- Documented observations, pain points, and usability issues
- Captured and analyzed user interactions through screenshots
- Performed affinity mapping to identify patterns and themes
- Synthesized findings into key insights
- Defined problem areas and opportunity spaces for redesign

Project Duration

6 weeks

Understanding the problem → Exploration → Usability Study → Testing → Analysis through affinity mapping → Insights

Before jumping into specific tools, I wanted to understand the bigger picture. AI was everywhere, from chatbots to code generators, but where exactly do UI/UX tools fit in this ecosystem?

To answer this, I mapped out the entire AI landscape and identified key categories. This helped me narrow down my focus from a broad space into the specific domain of UI/UX design tools.

Exploration

This mapping helped me transition from a broad AI perspective to a focused exploration of UI/UX design tools.

Selection Criteria

- Widely used or gaining traction in the design community
- Ability to generate UI from prompts
- Covers different approaches (design assist vs full app generation)
- Accessibility for testing (free/trial versions)
- Potential real-world usability for designers

Initial Tool Analysis (Surface-level understanding)

| Tool | What it does | First Impression |
| --- | --- | --- |
| Figma Make | AI inside Figma | Familiar + powerful |
| Stitch | UI from prompts | Fast |
| Lovable | Full app builder | Strong automation |
| Uizard | Wireframe generation | Beginner friendly |
| Banani | UI generator | Experimental feel |

Research Approach

Tool Analysis

A detailed evaluation of each tool by analyzing interface, usability, and output through annotated screenshots.

User Testing

Observing how users interact with the tools to identify real-world usability issues and pain points.

AI-Assisted

AI tools were used to support analysis, organize observations, and refine insights throughout the research process.

Tool Analysis

To test each tool fairly, I pasted the same prompt into all five AI tools and recorded observations across five workflow stages.

Prompt used:

This is a RC car customization website pages where we can choose the desired model of cars and customize them and place order. Could you pls redesign these three pages completely including theme, colors, typography layout, alignment etc.. except the logo ForzoX.

Workflow Stages

Google Stitch AI

Onboarding

What Works Well

- Simple and intuitive navigation panel makes it easy to explore projects
- Clear distinction between “App” and “Web” input reduces confusion
- Minimal UI reduces cognitive overload for first-time users
- Quick access to templates helps users start faster
- Smooth entry into dashboard for returning users

Pain Points

- No direct search for templates limits discoverability
- Template section requires excessive scrolling due to large card sizes
- Mode switching options are not clearly positioned
- Lack of structured onboarding guidance for new users
- Important features are discoverable only after exploration

Generation

What Works Well

- Easy modification toolbar
- Shows the uploaded images
- Minimal, responsive chat box
- Quick access to templates helps users start faster
- Ability to generate more variations

Pain Points

- Unsatisfactory UI screens
- Unable to hide chat box when not required
- Unable to compare with previous version after regeneration
- No undo option
- Voice input does not understand speech properly
- Voice input is not transcribed to text

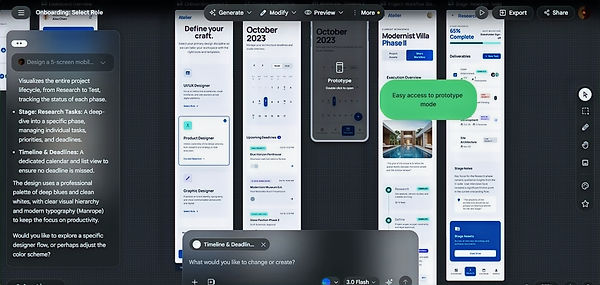

Editing

What Works Well

- Can select a specific component and edit it easily
- Easy access to prototype mode
- Option to edit text manually in prototype mode
- Can easily view different modes

Pain Points

- Does not apply the requested changes
- Unable to round edges manually
- Other adjustments, such as rounding edges, can't be made manually in prototype mode

Export

What Works Well

- Can be easily exported to different files

Figma Make

Onboarding

What Works Well

- Simple, minimal onboarding UI
- Easy model selection

Pain Points

- Unable to choose design style
- Unable to search for desired template

Generation

What Works Well

- Responsive prototype

Pain Points

- Unable to hide chat tab
- Unable to compare with the existing screens
- AI didn't understand the prompt
- Generation takes a long time
- Lacks comparison options
- Jumps directly to a prototype
- Can't edit in prototype mode
- Unable to view in different modes

Editing

What Works Well

- Can edit a specific element through an AI prompt
- Can edit each feature manually

Lovable AI

Onboarding

What Works Well

- Templates available

Pain Points

- Unable to choose design style
- Unable to search for desired templates

Generation

What Works Well

- Ready options for preview, edit, and code modes
- Responsive UI output
- Can switch between modes

Pain Points

- Unnecessary loading page
- Unable to set design system
- No export button available
- Unsatisfactory UI screens
- Unable to edit in prototype mode
- No way to compare different screens
- Unable to compare output with uploaded screenshots

Editing

What Works Well

- Option to shift between preview and design mode
- Can select a specific element and edit it through AI
- Side column with all editing tools

Pain Points

- Editing side column occupies a lot of space

Export

Pain Points

- Unable to export to different files

Uizard AI

Onboarding

What Works Well

- Clear navigation to products and templates
- Clear dashboard for templates and projects
- Selection of modes before creation
- Separate page for templates

Pain Points

- Unable to upload files on the home screen
- Template dashboard UI is similar to Canva's

Generation

What Works Well

- Screens can be converted into wireframes
- Has undo and redo features

Pain Points

- Unable to select multiple images at a time from the gallery
- Asks a lot of questions before asking for the prompt
- Asks again to choose between different modes
- Too many elements, which increases processing time
- Poor UI output
- Unable to redesign multiple screens at a time
- UI similar to Canva
- Unable to select and edit through AI
- No options to switch between different modes
- Unable to edit in preview mode

Banani AI

Onboarding

What Works Well

- Clear dashboard for templates and projects
- Clear options to guide users on where to start

Pain Points

- Unable to upload files on the home screen
- Template dashboard UI is similar to Canva's

Generation

What Works Well

- Able to change the theme and color manually

Pain Points

- UI layout similar to Figma
- Incomplete task
- Unable to compare with the uploaded screenshot
- No undo option

Editing

What Works Well

- Able to edit certain features manually

Pain Points

- Unable to edit manually in prototype mode
- No option to switch between different modes
- Unable to prototype manually

Export

What Works Well

- Can be exported easily to different files

User Testing

To validate the observations from tool analysis, I conducted usability testing with two users having different levels of design experience.

Participants

Jaideep

Industrial Design Student

A UI/UX design student with prior experience in design workflows and familiarity with a few AI-powered tools.

Athul Krishnav

Industrial Design Student

A beginner with no prior experience in UI/UX or exposure to AI tools, representing a first-time user perspective.

Task Given

Participants created their own prompts based on their design thinking and expectations. These prompts were then used across all selected AI tools to ensure consistency in evaluation while capturing differences in tool behavior and output quality.

Observations

Observations were recorded against key usability and performance factors. The main findings:

- Prompt-based interaction created uncertainty, with users unsure how detailed or structured their inputs should be.
- AI-generated outputs were often visually appealing but lacked functional depth or real-world usability.
- Limited control over iterations made it difficult for users to refine or steer the generated designs effectively.
- Editing capabilities felt restrictive, forcing users to rely heavily on regeneration instead of modification.
- Navigation and workflow across tools were inconsistent, leading to confusion during task progression.
- Users lacked the freedom to edit selected components manually; only text could be altered.
- Only a few tools could compare screens, and even those came with restrictions.
- Some tools prioritized speed over accuracy, resulting in quick but less relevant outputs.
- Lack of structured design workflows (research → ideate → test) made the tools feel incomplete for end-to-end usage.
- Export options and handoff readiness were unclear or limited in certain tools.
- Beginners felt overwhelmed, while experienced users felt constrained by lack of precision and control.

Affinity Mapping

Combining insights from user testing and detailed tool analysis, affinity mapping was used to make sense of recurring patterns and underlying issues.
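As a rough illustration of the grouping step, the sketch below clusters a handful of pain points from the tool analysis under theme labels and ranks the themes by how many tools they appear in. The pain points and tool names come from the analysis above; the theme tags and the helper names `group_by_theme` and `rank_themes` are assumptions made for this example, and the actual mapping was done by hand rather than in code.

```python
from collections import defaultdict

# (tool, pain point, theme). Pain points are quoted from the tool analysis;
# the theme tags are hypothetical labels assigned by hand for this sketch.
observations = [
    ("Google Stitch", "No undo option", "Editing & control"),
    ("Banani", "No undo option", "Editing & control"),
    ("Google Stitch", "Unable to hide chat box when not required", "Interface"),
    ("Figma Make", "Unable to hide chat tab", "Interface"),
    ("Figma Make", "AI didn't understand the prompt", "AI understanding"),
    ("Lovable", "Unable to compare output with uploaded screenshots", "Iteration & comparison"),
    ("Banani", "Unable to compare with the uploaded screenshot", "Iteration & comparison"),
]


def group_by_theme(rows):
    """Collect (tool, note) pairs under each theme label."""
    themes = defaultdict(list)
    for tool, note, theme in rows:
        themes[theme].append((tool, note))
    return dict(themes)


def rank_themes(themes):
    """Order themes by number of distinct tools affected, then alphabetically."""
    return sorted(themes, key=lambda t: (-len({tool for tool, _ in themes[t]}), t))


clusters = group_by_theme(observations)
for theme in rank_themes(clusters):
    tools = sorted({tool for tool, _ in clusters[theme]})
    print(f"{theme}: {len(clusters[theme])} notes across {', '.join(tools)}")
```

Ranking by distinct tools rather than raw note count is one possible choice; it keeps a single tool with many similar complaints from dominating the themes.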

Insights

Limited Editing & Control Breaks the Design Process

Users were unable to fully modify or refine generated designs due to missing editing tools, lack of undo functionality, and restricted control over design systems.

AI Struggles to Accurately Interpret User Intent

AI tools often failed to clearly understand prompts, generating irrelevant or unexpected outputs. This created a gap between user intent and system response, reducing trust and increasing effort in refining results.

Visual Output Lacks Consistency and Practical Usability

While outputs were sometimes visually appealing, users experienced inconsistencies across screens, unclear UI structures, and missing elements like icons or assets, making designs less usable in real-world scenarios.

Poor Workflow Navigation Increases Cognitive Load

Users faced difficulties navigating between screens, previewing designs, and managing multiple outputs. Excessive scrolling and lack of clear structure made the overall experience fragmented and inefficient.

Lack of Iteration & Comparison Limits Design Exploration

The absence of version comparison and iteration tracking prevented users from evaluating different outputs effectively, limiting their ability to refine and evolve designs.

Interface-Level Decisions Impact Usability

UI issues such as persistent chatboxes and poorly placed templates interfered with the user experience, reducing focus and workspace efficiency.

Missing Core Features Restrict End-to-End Usability

Key functionalities such as template search, asset generation (icons/images), and conversion to structured outputs (wireframes, sitemaps) were missing, making the tools feel incomplete for a full design workflow.

AI-Assisted Analysis

AI was used as a supporting tool, while all final decisions and interpretations were critically evaluated and validated by the researcher.

Problem Statement

Designers using AI-powered UI/UX tools face challenges in controlling and refining generated outputs due to limited editing capabilities, unclear workflows, and inconsistent AI interpretations, making it difficult to create usable, structured, and production-ready designs efficiently.

How Might We

Control & Editing

How might we give designers greater control to edit and refine AI-generated designs without needing to regenerate everything?

AI Understanding

How might we help users communicate their intent more clearly so AI can generate more accurate and relevant outputs?

Workflow & Navigation

How might we create a clear and intuitive workflow that guides users from idea to final design seamlessly?

Output Quality & Usability

How might we ensure AI-generated designs are consistent and usable in real-world scenarios, not just visually appealing?

Iteration & Exploration

How might we enable users to easily compare, iterate, and evolve multiple design versions?

Feature Completeness

How might we integrate essential design features like assets, templates, and multi-screen handling into a unified experience?

Opportunity Areas

Control & Editing

💡 Opportunity

Enable precise control over AI-generated designs.

Features

- Direct element-level editing
- Edit without regenerating
- Undo & history timeline
- Edit within prototype mode

Guided AI Interaction

💡 Opportunity

Reduce confusion in prompt-based workflows.

Features

- Guided prompt builder
- Smart suggestions while typing
- Predefined templates
- Visual input selections

Structured Workflow

💡 Opportunity

Provide a clear end-to-end design process.

Features

- Stage-based workflow (Research → Export)
- Progress tracking
- AI suggestions per stage
- Task-based navigation

Output Quality

💡 Opportunity

Ensure designs are usable, not just visually appealing.

Features

- Auto-applied design systems
- Real UI components
- Multi-screen generation
- AI usability feedback

Iteration & Versioning

💡 Opportunity

Support continuous design exploration.

Features

- Version history tracking
- Side-by-side comparison
- Branching design options
- Save & label variations

Asset & Template Ecosystem

💡 Opportunity

Speed up design using ready-made structures.

Features

- Template library (apps, dashboards, onboarding flows)
- AI-recommended templates based on prompt
- Search & filter templates by category
- Save & reuse custom templates

Multi-Format Generation ⭐

💡 Opportunity

Unify all design outputs into one system.

Features

- Generate wireframes, sitemaps, prototypes
- Convert between formats
- Maintain cross-output consistency

Sketch-to-UI ⭐

💡 Opportunity

Bridge sketching and digital design.

Features

- Draw using tablet/writing pad
- AI detects layout & components
- Convert to editable wireframes
- Hybrid sketch → refine workflow

Starting the Design

(Work In Progress)

The design phase is currently underway, where the identified opportunity areas are being translated into a cohesive product solution.

This includes developing wireframes and interaction flows for a unified AI UX platform that addresses gaps in control, editing, and workflow continuity.

Next Steps

1. Designing a unified AI UX platform based on identified gaps
2. Exploring sketch-to-UI interaction workflows
3. Developing integrated wireframe-to-prototype systems
4. Validating proposed solutions with users
